I’ve been poking around in the McLain et al 2018 paper for a while now, particularly after having Dr. McLain on our podcast, Beyond the Basics. I cannot possibly cover all the baraminology, let alone the paleontology of the 45 page technical paper in one blog so this will be a running series. What I’ve done for today’s blog post is taken a single dataset, the first one discussed in in the 2018 paper from Brussatte et al (2014). I ran it through both BDISTMDS and BARCLAY. What follows is what I discovered. Note it will take multiple parts to cover this single dataset and the other datasets will take even longer.

One of the critiques I had of the McLain et al paper was based on procedure. They failed to report bootstrapping values. Having run the Brusatte dataset, I can see why. The bootstrap values vary wildly. In many cases, they are 100, supposedly indicative of good data. However, many times the numbers are much lower than that. Many bootstrap values are sub-70% and some are as low as 40%, indicative that the data might be bad. This is true in both BDISTMDS and BARCLAY. Based on what I’ve seen with the dataset, this analysis will be borne out as we dig further into this. McLain et al probably should have at least mentioned this in passing, but they did not. Now the paper is mammoth so I understand why they may have felt the need to abbreviate, but bootstrapping is part of the model, however much I disagree with it, and should have been mentioned.

My next step was to run the dataset at a 95% character relevance cut off. My interest in doing so was because its is only in the non-living organisms that the statistical baraminology programs use the 75% cut off. For living organisms, they use 95%. So I wanted to see what using a higher cutoff would do to the data. After several attempts, I gave up. Neither BDISTMDS nor BARCLAY would process it at 95% relevance. That concerns me slightly. I understand the pragmatism behind not using a 95% cut off for fossils. They are often fragmentary and incomplete. But lowering the relevance cut off introduces more noise and frankly, might help explain why the graphs below are the way they are.

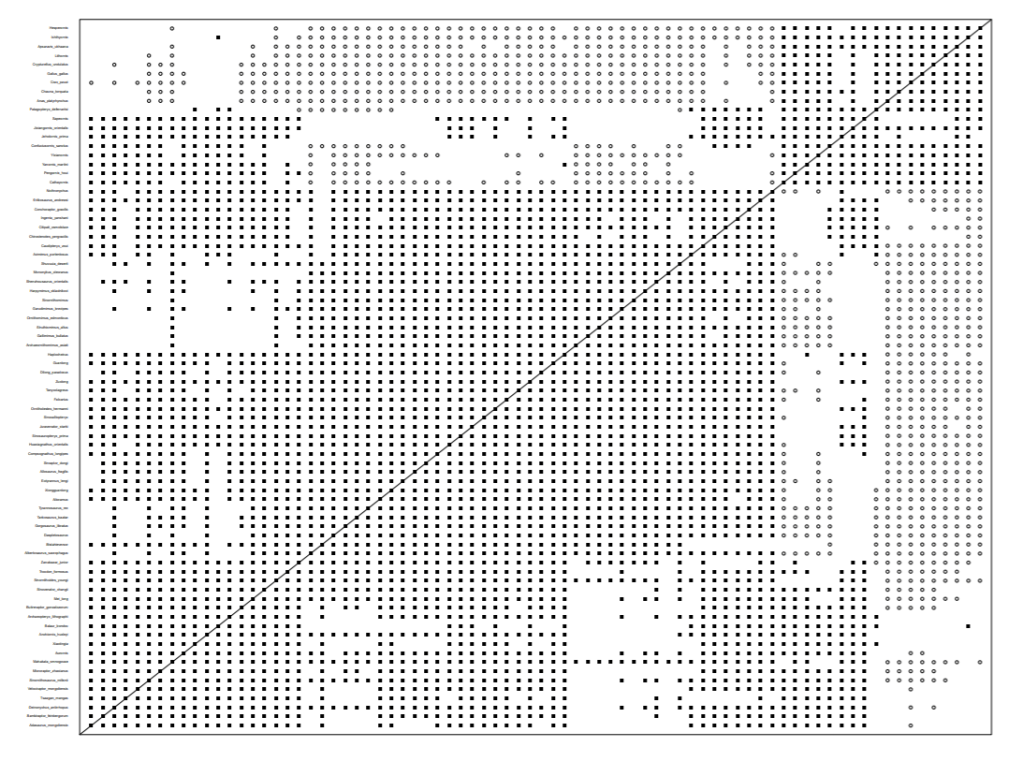

Having failed at that, I attempted to replicate the graph presented in the McLain et al 2018 paper. It differed substantially. That does not surprise me completely, since I did not adjust taxic relevance as McLain et al did.

I then ran the same dataset through Barclay. The result is below. Not all that different as you can see.

Note there are some differences, likely due the different statistical coefficients used by the programs. However, the same word applies to all of them: messy. The multidimensional scaling, which is supposed to help elucidate groups, is also utterly useless. Both MDS plots show one tangled mess with no clear groups.

As you can see by dragging across the screen, the MDS plots are close to identical and show absolutely nothing. At this stage, before I begin making cuts to the dataset to imitate the McLain et al paper, it is worth pointing something out: more taxa is not always better. Of the over 800 characters in the Brussatte dataset, only just over 100 were used. Having a ton of taxa with few overlapping characters seems to cloud the analysis.

In the next post in this series, I will trim down the dataset to match the work that McLain et al did in their paper. However, I will point out here before so doing that trimming down the dataset in the manner they did opens them to the charge of data manipulation. In other words, someone could argue (I’m not), that they did not like the results so they chose to cherry pick data to get results they preferred. I don’t for a second think that’s why McLain et al pared down the dataset. I agree with them, it’s too big, with too many unrelated taxa, which are clouding the results. But there is no empirical reason for them to have done so ( at least as far as I can see). I mean no disrespect to Dr. McLain or his colleagues in analyzing this paper. I simply do not think their conclusions are accurate and I want to work through how they reached those conclusions in order to see where the errors are and how they could be corrected. Part two to follow.

Do you know what’s going to happen when you die? Are you completely sure? If you aren’t, please read this or listen to this. You can know where you will spend eternity. If you have questions, please feel free to contact us, we’d love to talk to you.